

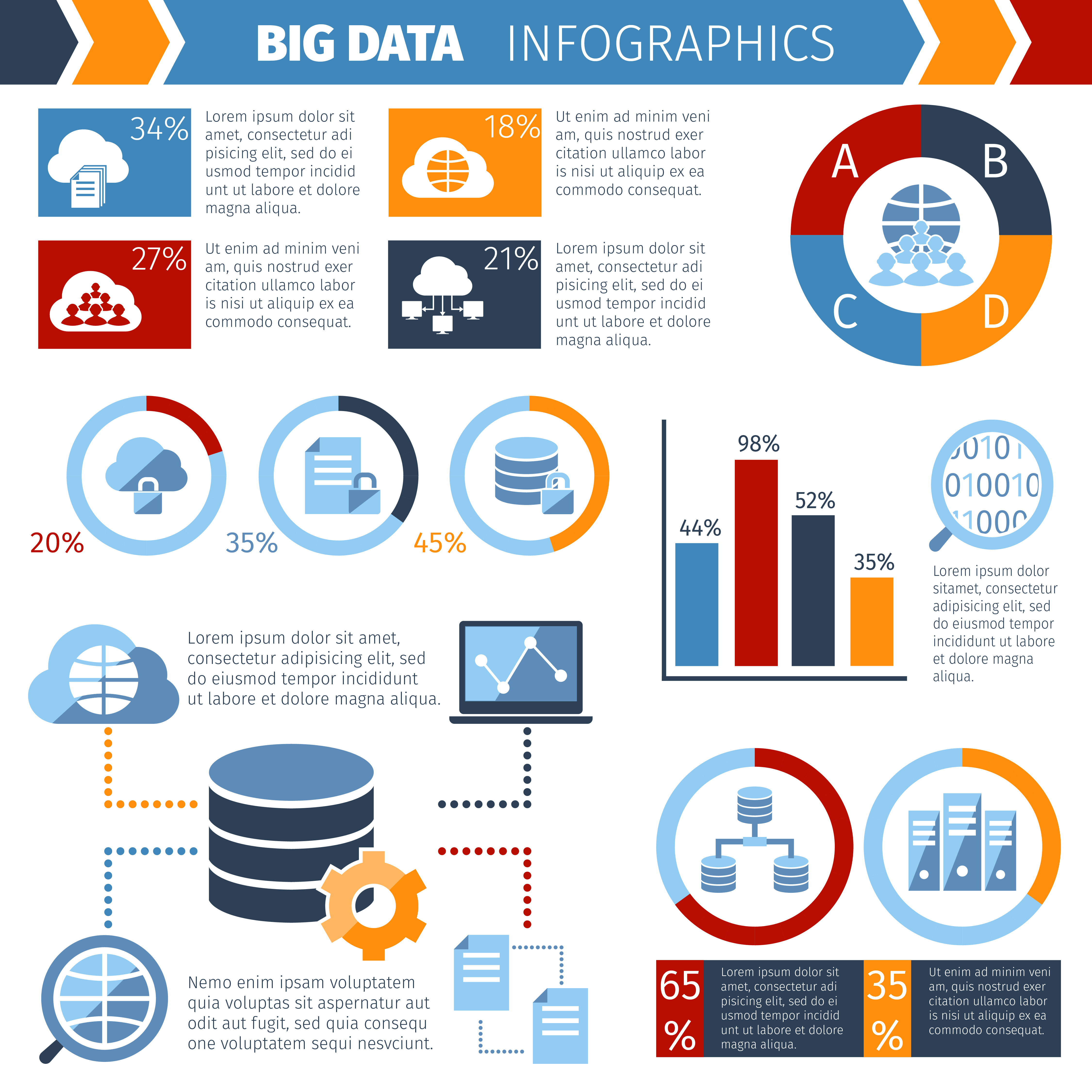

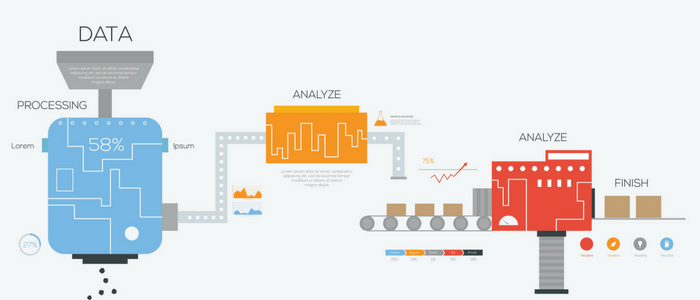

Data processing

12/18/2023

Data is only as valuable as it is usable. Raw data is generated in different formats and is not validated or organized in any way. For this reason, raw data must be cleaned and translated into a form that’s usable for analysis. Fast and effective data processing is essential for a company that wishes to be data-driven and to support operations involving data. In this post, we’ll explain what data processing is and explore the changes that have created new demands on data processing.

The term data processing describes the standardized sequence of operations used to collect raw data and convert it into usable information. Raw data sources may include social media pages, websites and applications, POS systems, and IoT sensors. Data processing is a key component of the data pipeline, which enables the flow of data from a source into a data warehouse or other end destination. Although the method may vary depending on the data source and use case, data processing always follows a series of prescribed steps.

Elements of data processing may occur either before data is loaded into the warehouse or after it has been loaded. With the rise of big data, and as data processing has shifted from on-premises to cloud-based systems, the data pipeline has evolved to support the needs of new technologies. Let’s look at three major changes that have created new demands on data processing.

When data was only coming from enterprise resource planning (ERP), supply chain management (SCM), and customer relationship management (CRM) systems, it could be loaded into pre-modeled warehouses using highly structured tables. Data was processed and transformed outside of the “target” or destination system via an extract, transform, and load (ETL) workflow. These traditional ETL operations relied on a separate processing engine, which required extra data movement, tended to be slow, and was not designed to accommodate schemaless, semi-structured formats. When streaming data sources came into existence and businesses recognized the need to make use of the massive amounts of data being generated in a variety of formats, ETL workflows no longer sufficed.

Modern data pipelines now extract and load the data first, and then transform it once the data reaches its destination (ELT rather than ETL). These pipelines take advantage of the cloud’s elastic, on-demand processing resources, so you don’t need to prepare data before you load it. You can load the raw data and transform it later, once the requirements are understood.

Batch processing to continuous processing

Batch processing updates data on a weekly, daily, or hourly basis, which allows for good compression and optimal file sizes. But batch processing introduces latency: the time between when data is generated and when it is available for analysis. Latency delays time to insight, leading to lost value and missed opportunities.
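To make the latency point concrete, here is a minimal Python sketch. It compares when records become available under an hourly batch schedule with when they become available under record-at-a-time (continuous) processing. The schedule start, the event times, and the 5-second streaming delay are hypothetical values chosen for illustration. The model also assumes that each batch run picks up every record generated before it starts and then completes instantly.

```python
from datetime import datetime, timedelta


def next_batch_run(generated_at: datetime, epoch: datetime, interval: timedelta) -> datetime:
    """Time at which a record becomes available under a fixed batch schedule.

    Assumption: a run starts every `interval` from `epoch`, picks up every
    record generated before it starts, and finishes instantly.
    """
    completed = (generated_at - epoch) // interval  # timedelta // timedelta -> int
    return epoch + (completed + 1) * interval


def latency(generated_at: datetime, available_at: datetime) -> timedelta:
    """Time between when data is generated and when it is available for analysis."""
    return available_at - generated_at


def mean(durations: list[timedelta]) -> timedelta:
    """Average of a non-empty list of durations."""
    return sum(durations, timedelta()) / len(durations)


epoch = datetime(2023, 12, 18)       # hypothetical first batch run
hourly = timedelta(hours=1)          # batch interval
stream_delay = timedelta(seconds=5)  # hypothetical per-record processing time
events = [epoch + timedelta(minutes=m) for m in (5, 30, 59)]

batch_latencies = [latency(e, next_batch_run(e, epoch, hourly)) for e in events]
stream_latencies = [latency(e, e + stream_delay) for e in events]

print([d.seconds // 60 for d in batch_latencies])  # [55, 30, 1]
print(mean(batch_latencies))                       # 0:28:40
print(mean(stream_latencies))                      # 0:00:05
```

Under these assumptions, an hourly batch job makes a record wait anywhere from a moment to nearly a full hour. Continuous processing keeps latency close to the per-record processing time.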

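The ELT pattern described in this post loads raw data first and transforms it inside the destination later. It can be sketched in Python with the standard-library `sqlite3` module standing in for a cloud data warehouse. The table names, columns, and sample rows are hypothetical. A real warehouse would use its own loading tools and SQL dialect.

```python
import sqlite3

# Hypothetical raw records as they arrive from a source: untyped, unvalidated strings.
raw_rows = [
    ("1001", " 19.99 ", "2023-12-18"),
    ("1002", "5", "2023-12-18"),
    ("1003", "", "2023-12-19"),  # missing amount
]

conn = sqlite3.connect(":memory:")  # stand-in for a cloud data warehouse (assumption)

# Extract + Load: land the raw data as-is, with no upfront transformation.
conn.execute("CREATE TABLE raw_orders (order_id TEXT, amount TEXT, order_date TEXT)")
conn.executemany("INSERT INTO raw_orders VALUES (?, ?, ?)", raw_rows)

# Transform: later, once requirements are understood, clean and type the data
# inside the destination using SQL, leaving raw_orders untouched.
conn.execute("""
    CREATE TABLE orders AS
    SELECT CAST(order_id AS INTEGER) AS order_id,
           CAST(TRIM(amount) AS REAL) AS amount,
           order_date
    FROM raw_orders
    WHERE TRIM(amount) <> ''
""")

daily = conn.execute(
    "SELECT order_date, SUM(amount) FROM orders GROUP BY order_date ORDER BY order_date"
).fetchall()
print(daily)
```

Keeping `raw_orders` untouched means the transformation can be rewritten and rerun when requirements change, without extracting the data from the source again.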